Integrated systems advance data-ready biologics workflows

Why software integration is the key to reproducible, data-driven biologics workflows

16 Apr 2026

Editorial article

James Chen, Founder and CEO, Velsson Technology

As biopharma workflows grow more complex and computationally demanding, the ability to deliver precise volumes is no longer enough to guarantee run-to-run reproducibility or to generate the structured datasets that modern drug development demands. James Chen, Founder and CEO of Velsson Technology, explains why software integration is becoming as important as the instruments themselves.

Antibody-drug conjugate (ADC) development is one of the most technically demanding workflows in biopharma R&D. Conjugation efficiency and drug-to-antibody ratio (DAR) are highly sensitive to molar ratios and incubation parameters, so variability introduced during reagent preparation or reaction sequencing can directly affect product quality and experimental reproducibility. In workflows with this degree of interdependence between steps, dispensing accuracy alone is not a sufficient safeguard.

Velsson Technology is a benchtop automation company specializing in integrated biopharma workflows. Founder and CEO James Chen argues that the gap between dispensing accuracy and true workflow reproducibility is one that many laboratories have been slow to recognize. "Dispensing accuracy is foundational, but in complex workflows it is only one variable among many," he says. "If a system delivers precise volumes but does not control sequencing, enforce timing consistency, or capture structured metadata, reproducibility can still suffer."

When precision is not enough

Over the past five years, automation platforms in biologics R&D have been asked to do significantly more than execute transfers. As drug discovery workflows have become increasingly iterative and computationally informed, the question has shifted from whether an instrument can perform a task to whether a system can maintain consistency across an entire workflow and generate usable data for downstream analysis.

This shift has exposed a gap that dispensing accuracy alone cannot close. Multi-step biologics workflows span antibody engineering, assay development, conjugation chemistry, and purification. At each of these stages, variability can enter through inconsistent mixing, timing differences between reaction steps, or incomplete documentation of experimental parameters. "The goal is not only repeatable liquid transfers," says Chen, "but repeatable experimental conditions."

Orchestration as the missing layer

"Workflow management software transforms automation from a task executor into a coordination layer within the laboratory," explains Chen. Rather than performing isolated transfers, a software-integrated system orchestrates step sequencing, enforces protocol logic, and captures experimental context in real time.

"In multi-step workflows, the relationship between steps matters as much as the individual transfer," Chen continues. "Reaction incubation times, reagent order, and environmental conditions all contribute to experimental outcomes. Automation platforms must therefore manage the workflow holistically rather than operating as isolated liquid transfer devices. Precision without orchestration still leaves room for variability."

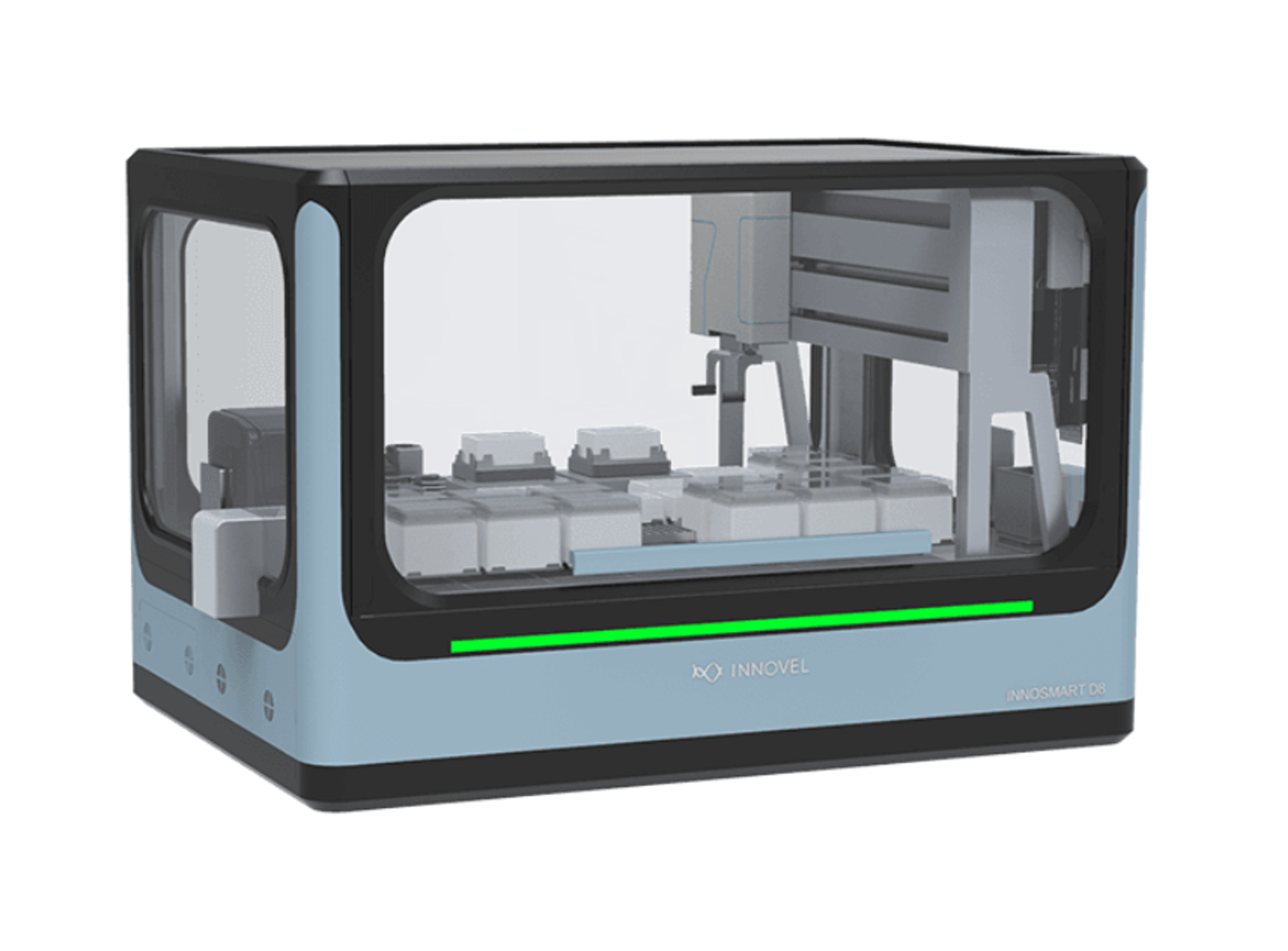

The Velsson INNOSMART® D8 was developed with this in mind. Its dual 4-channel pipetting modules achieve a coefficient of variation (CV) below 0.5% for volumes above 300 µL, and automatic liquid level detection helps maintain consistency as reagent volumes vary. Beyond the hardware, integrated protocol editing software supports version-controlled method building, audit trails, and recipe libraries, generating a structured record of how each run was executed rather than just its outputs.
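CV is the standard deviation of replicate dispenses divided by their mean, expressed as a percentage, so a CV below 0.5% at 300 µL corresponds to a standard deviation of under 1.5 µL. The minimal Python sketch below is illustrative only and is not Velsson's verification procedure. The replicate volumes are hypothetical, and the sketch assumes the sample (n-1) standard deviation; formal pipette test protocols may define the calculation and sample size differently.

```python
import statistics


def coefficient_of_variation(volumes_ul: list[float]) -> float:
    """Return the CV in percent: sample standard deviation / mean * 100."""
    if len(volumes_ul) < 2:
        raise ValueError("need at least two replicate volumes")
    mean = statistics.mean(volumes_ul)
    # statistics.stdev uses the sample (n-1) denominator.
    return statistics.stdev(volumes_ul) / mean * 100


if __name__ == "__main__":
    # Hypothetical replicate dispenses of a 300 µL target (e.g. gravimetric checks).
    replicates = [300.2, 299.1, 300.8, 299.6, 300.5]
    cv = coefficient_of_variation(replicates)
    # Compare against the 0.5% figure quoted for volumes above 300 µL.
    print(f"CV = {cv:.2f}%, within 0.5%: {cv < 0.5}")
```

In practice the replicate volumes would come from a measurement method such as weighing dispensed water, not from the liquid handler's own log.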

The Velsson INNOSMART® D8 also connects directly with downstream instruments, including plate readers, sealers, imagers, and shaker incubators, reducing the manual handoffs between steps where variability most commonly enters complex biologics workflows.
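Chen's point that the relationship between steps matters as much as each individual transfer can be made concrete by checking an executed run against its protocol definition. The Python sketch below is a hypothetical illustration, not the D8's software: the `Step` type, the `check_run` function, the step names, and the time windows are all assumptions. It flags runs in which steps executed out of order, or in which the interval between one step and the next fell outside an allowed window.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step in a hypothetical protocol definition."""
    name: str
    min_duration_s: float  # shortest acceptable time before the next step starts
    max_duration_s: float  # longest acceptable time before the next step starts


def check_run(protocol: list[Step], events: list[tuple[str, float]]) -> list[str]:
    """Compare executed (step_name, start_time_s) events against the protocol.

    Returns human-readable deviations; an empty list means the run followed
    the defined order and every checked interval stayed within its window.
    """
    deviations: list[str] = []
    executed = [name for name, _ in events]
    expected = [step.name for step in protocol]
    if executed != expected:
        deviations.append(f"order mismatch: expected {expected}, got {executed}")
        return deviations  # timing checks are meaningless if the order is wrong
    # The time between consecutive step starts is the duration of the earlier step.
    # The final step has no successor, so its duration is not checked here.
    for step, (_, start), (_, next_start) in zip(protocol, events, events[1:]):
        elapsed = next_start - start
        if not step.min_duration_s <= elapsed <= step.max_duration_s:
            deviations.append(
                f"{step.name}: {elapsed:.0f} s outside "
                f"[{step.min_duration_s:.0f}, {step.max_duration_s:.0f}] s"
            )
    return deviations


if __name__ == "__main__":
    # Hypothetical conjugation-style protocol; windows are illustrative only.
    protocol = [
        Step("dispense_antibody", 0, 120),
        Step("dispense_drug_linker", 0, 120),
        Step("incubate", 3600, 3900),
        Step("quench", 0, 60),
    ]
    # The quench starts late, so incubation runs longer than its window allows.
    run = [("dispense_antibody", 0), ("dispense_drug_linker", 90),
           ("incubate", 150), ("quench", 4200)]
    for deviation in check_run(protocol, run):
        print(deviation)
```

In this example every dispense could be perfectly accurate and the run would still be flagged, because the incubation lasted 4,050 s against a hypothetical 3,600 to 3,900 s window. That is the kind of variability that precision alone does not catch.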

The data infrastructure argument

The data question, however, runs deeper than reproducibility alone. As AI-driven modeling and advanced analytics become more deeply embedded in biopharma development pipelines, the quality of data coming out of the automation layer directly determines how useful those tools can be.

"AI and advanced analytics rely on structured and consistent datasets," says Chen. "If experimental metadata (reagent volumes, timing sequences, protocol configurations) is incomplete, manually recorded, or inconsistently formatted, modeling reliability decreases." Laboratories therefore need to plan for data generation from the outset, choosing an automated system that produces machine-readable, parameter-tagged outputs that preserve experimental context, not just final results.

This means that the benchtop automation system must now be seen as part of the laboratory's broader data infrastructure, sitting alongside analytical instruments and laboratory information management systems (LIMS) rather than simply in service of them. "By generating structured, time-stamped, and parameter-tagged datasets, the automation layer becomes directly supportive of quality control, audit readiness, and computational analysis," Chen explains. "In this model, hardware precision and software orchestration are inseparable."
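What a "structured, time-stamped, and parameter-tagged" record might look like is easier to see in code. The sketch below is hypothetical and does not describe the D8's actual export format: the field names, the `step_record` and `protocol_fingerprint` helpers, and the example parameters are all assumptions. Each executed step becomes one JSON line carrying a UTC timestamp, its parameters, and a SHA-256 fingerprint of the protocol definition, so that runs executed under an identical method version can be grouped and compared downstream.

```python
import hashlib
import json
from datetime import datetime, timezone


def protocol_fingerprint(protocol: dict) -> str:
    """SHA-256 of a key-sorted JSON serialization, so identical methods hash identically."""
    canonical = json.dumps(protocol, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def step_record(run_id: str, protocol: dict, step: str, parameters: dict) -> str:
    """Return one JSON line describing a step, tagged with parameters and a UTC timestamp."""
    record = {
        "run_id": run_id,
        "protocol_name": protocol["name"],
        "protocol_sha256": protocol_fingerprint(protocol),
        "step": step,
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "parameters": parameters,
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical protocol definition and step parameters, for illustration only.
    protocol = {
        "name": "adc_conjugation_demo",
        "version": 3,
        "steps": ["dispense_antibody", "dispense_drug_linker", "incubate", "quench"],
    }
    print(step_record("RUN-0001", protocol, "dispense_drug_linker",
                      {"volume_ul": 150.0, "drug_linker_equivalents": 8.0}))
```

Because the fingerprint is computed over a key-sorted serialization, two protocol definitions that differ only in key order hash identically, while any change to a parameter produces a new fingerprint. Writing one JSON object per line (the JSON Lines convention) keeps the log easy to append during a run and to load into most analysis tools afterwards.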

A different set of questions

For laboratory leaders selecting a liquid handling platform, the right questions look different than they did five years ago. Throughput and dispensing accuracy remain important, but they are no longer sufficient proxies for long-term platform value in complex biologics environments. Chen suggests the more useful questions are:

1. Does this system enforce protocol consistency across runs?

2. How is experimental metadata captured and structured?

3. Can workflows be version-controlled and replicated precisely?

4. Is the data export compatible with downstream analytics and AI modeling systems?

"Throughput is important," Chen acknowledges, "but long-term value is determined by reproducibility, traceability, and data integrity. In complex biologics workflows, those factors often outweigh raw speed."

For many biopharma laboratories, the conversation around AI readiness has focused on modeling tools and data science capability. Chen argues the starting point is earlier than that. "AI readiness begins at the experiment design and execution layer," he says. "When workflow definitions, timing, and reagent parameters are digitally captured, datasets become more suitable for modeling, optimization, and predictive analysis." Reproducibility, in other words, is not just a hardware problem; it is a systems problem. For teams looking to address it at source, the Velsson INNOSMART® D8 is designed to be a practical place to start.